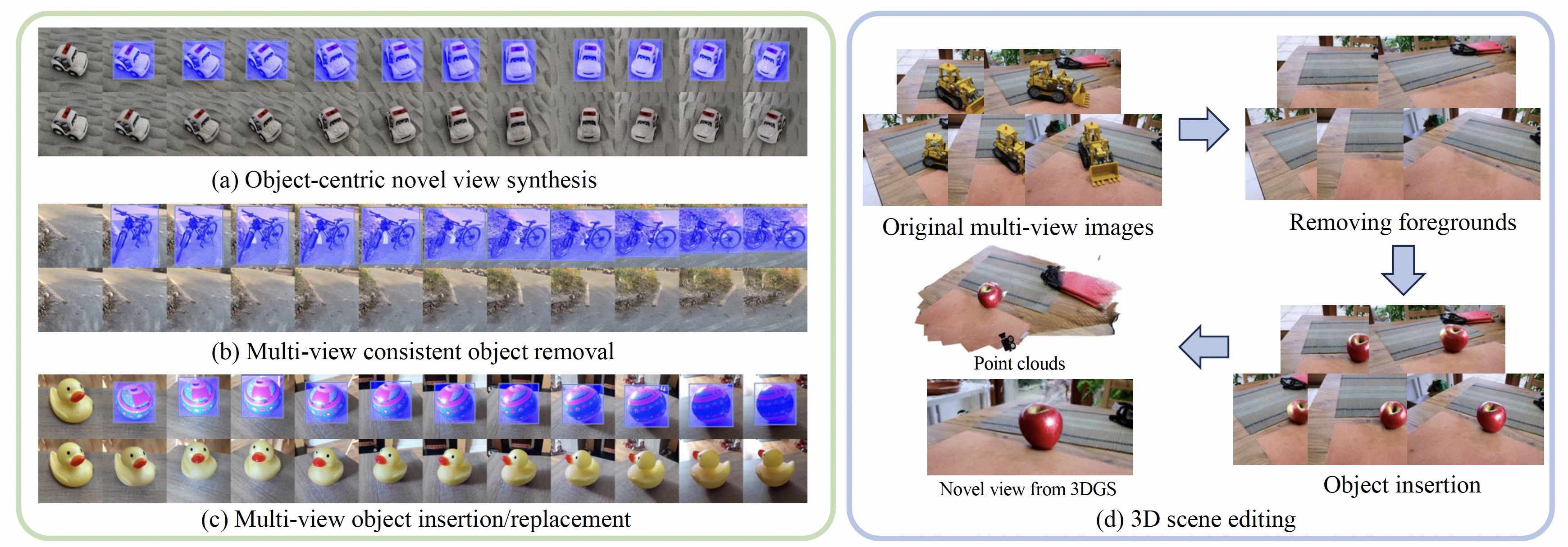

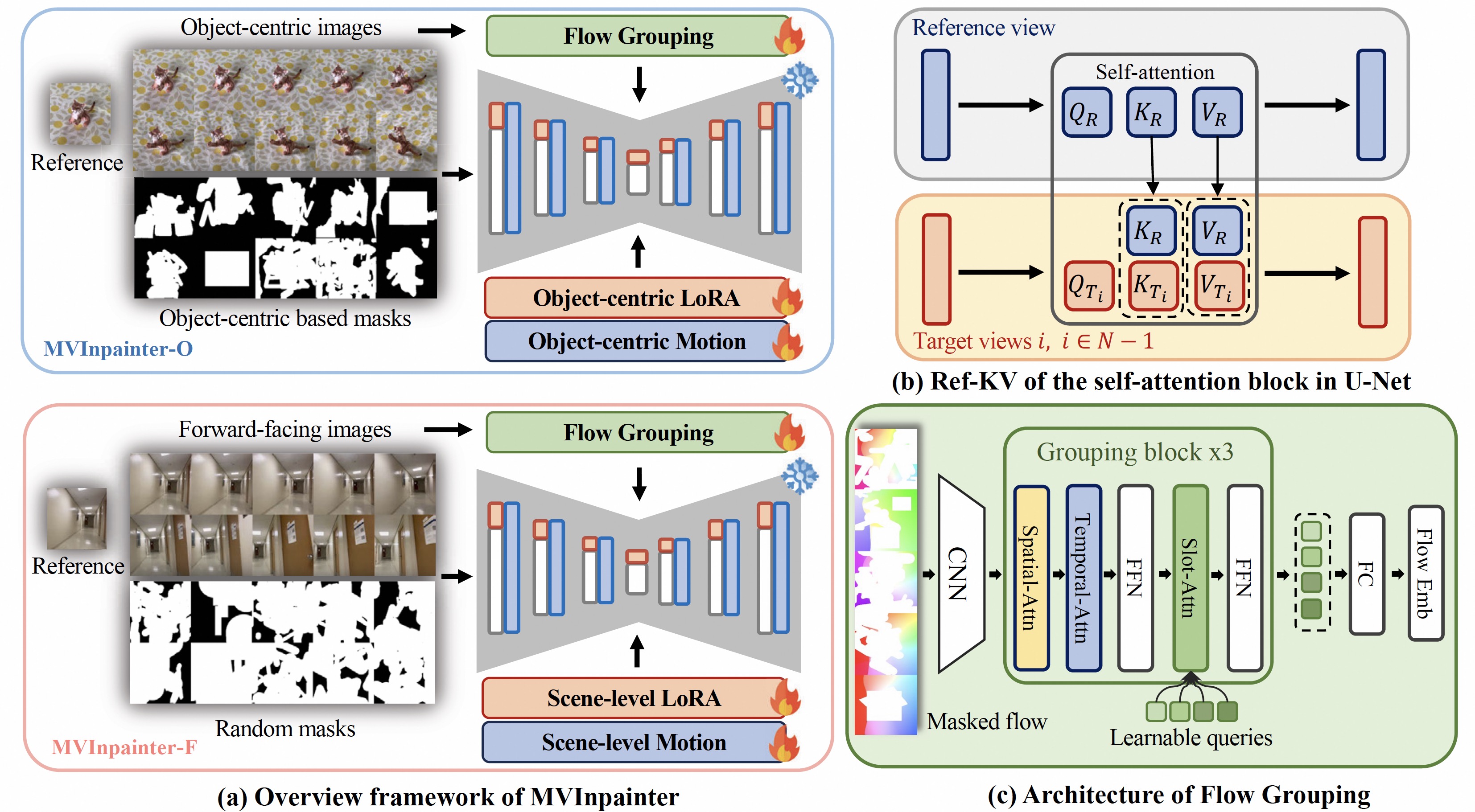

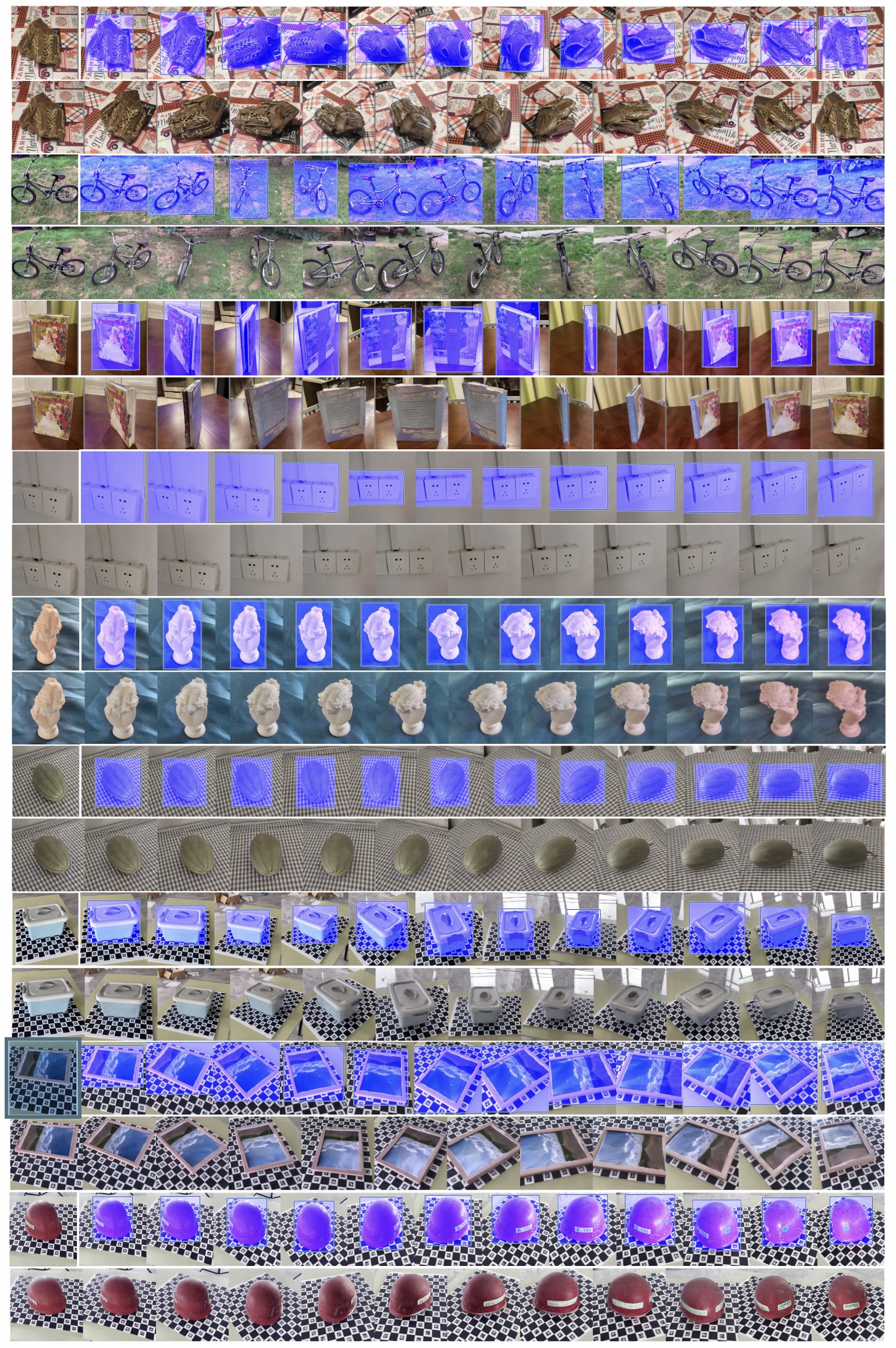

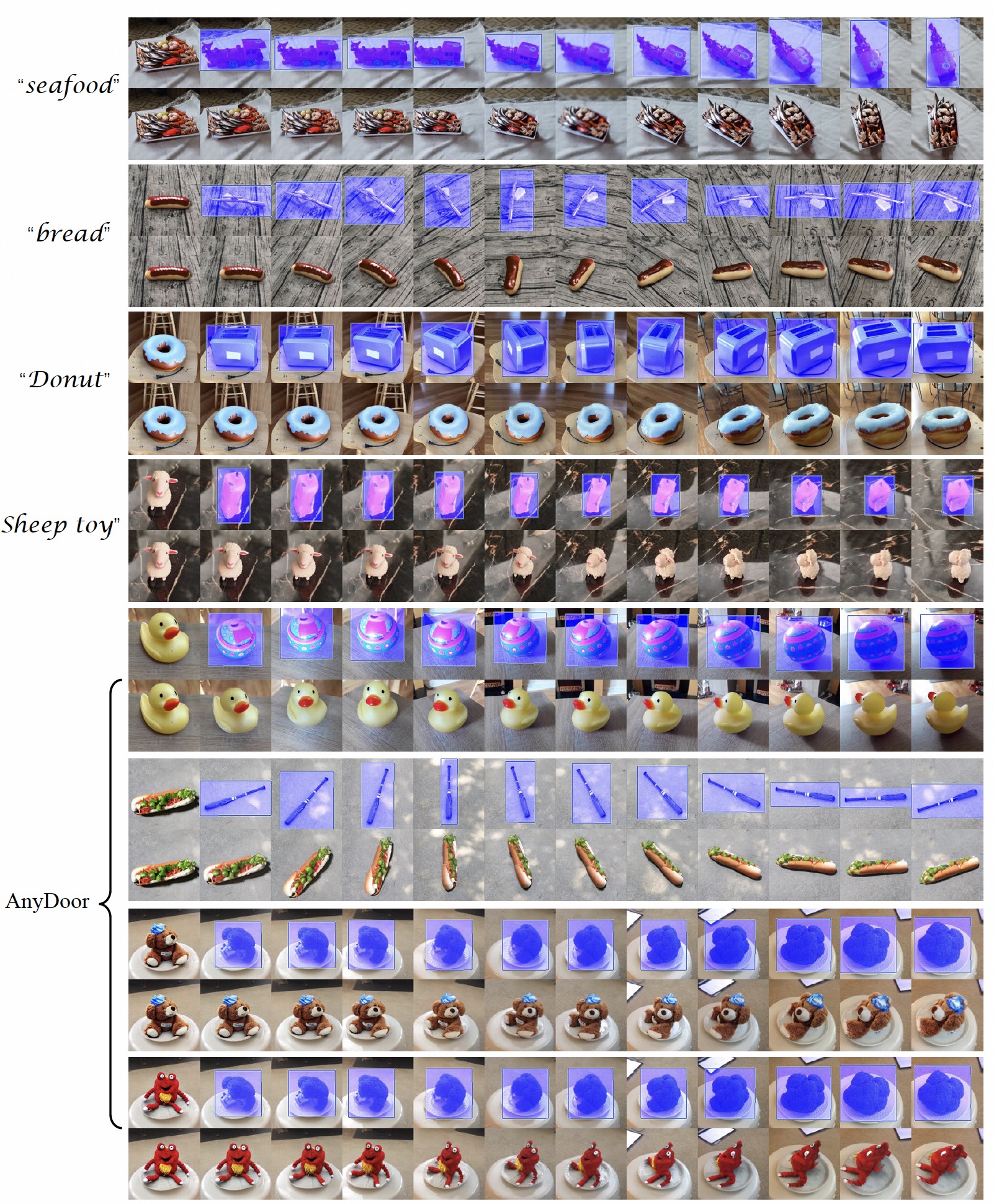

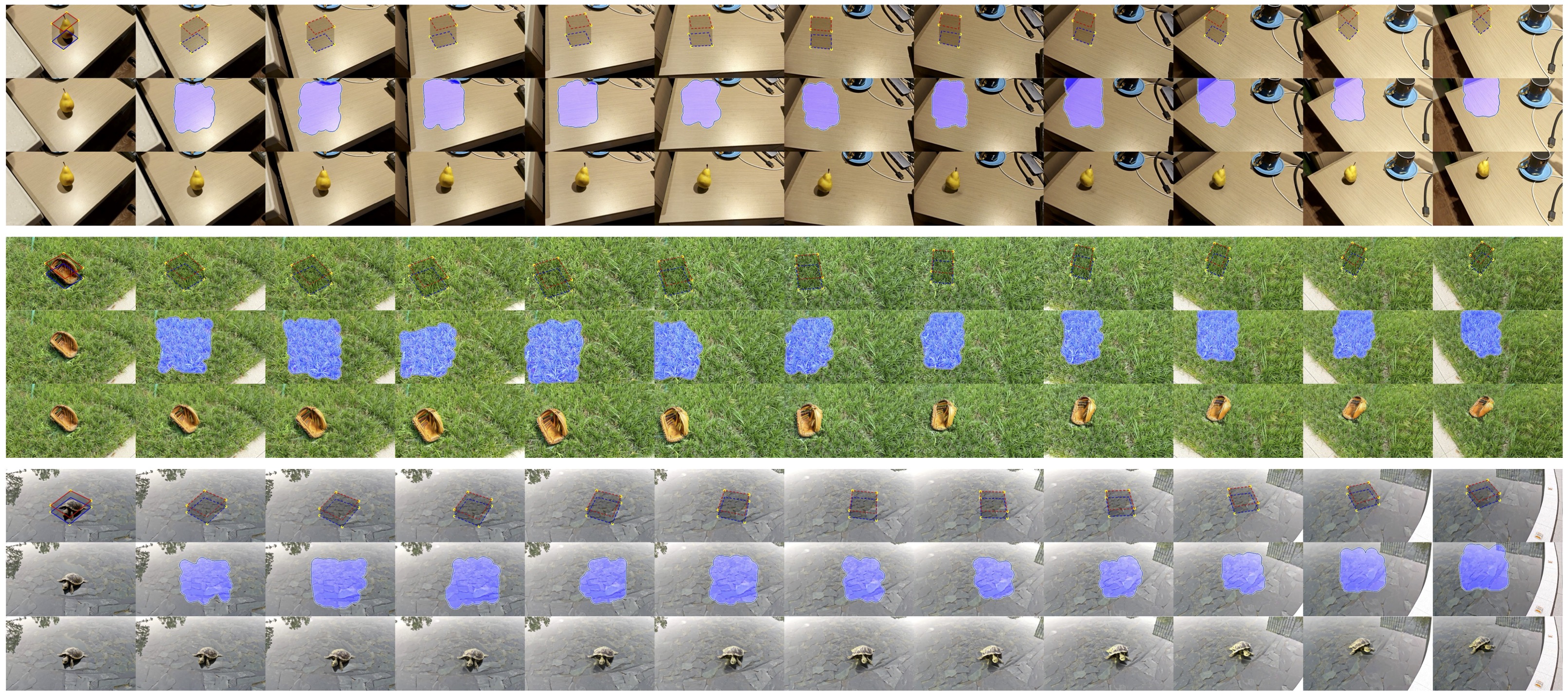

Novel View Synthesis (NVS) and 3D generation have recently achieved prominent improvements. However, these works mainly focus on confined categories or synthetic 3D assets, which are discouraged from generalizing to challenging in-the-wild scenes and fail to be employed with 2D synthesis directly. Moreover, these methods heavily depended on camera poses, limiting their real-world applications. To overcome these issues, we propose MVInpainter, re-formulating the 3D editing as a multi-view 2D inpainting task. Specifically, MVInpainter partially inpaints multi-view images with the reference guidance rather than intractably generating an entirely novel view from scratch, which largely simplifies the difficulty of in-the-wild NVS and leverages unmasked clues instead of explicit pose conditions. To ensure cross-view consistency, MVInpainter is enhanced by video priors from motion components and appearance guidance from concatenated reference key\&value attention. Furthermore, MVInpainter incorporates slot attention to aggregate high-level optical flow features from unmasked regions to control the camera movement with pose-free training and inference. Sufficient scene-level experiments on both object-centric and forward-facing datasets verify the effectiveness of MVInpainter, including diverse tasks, such as multi-view object removal, synthesis, insertion, and replacement.

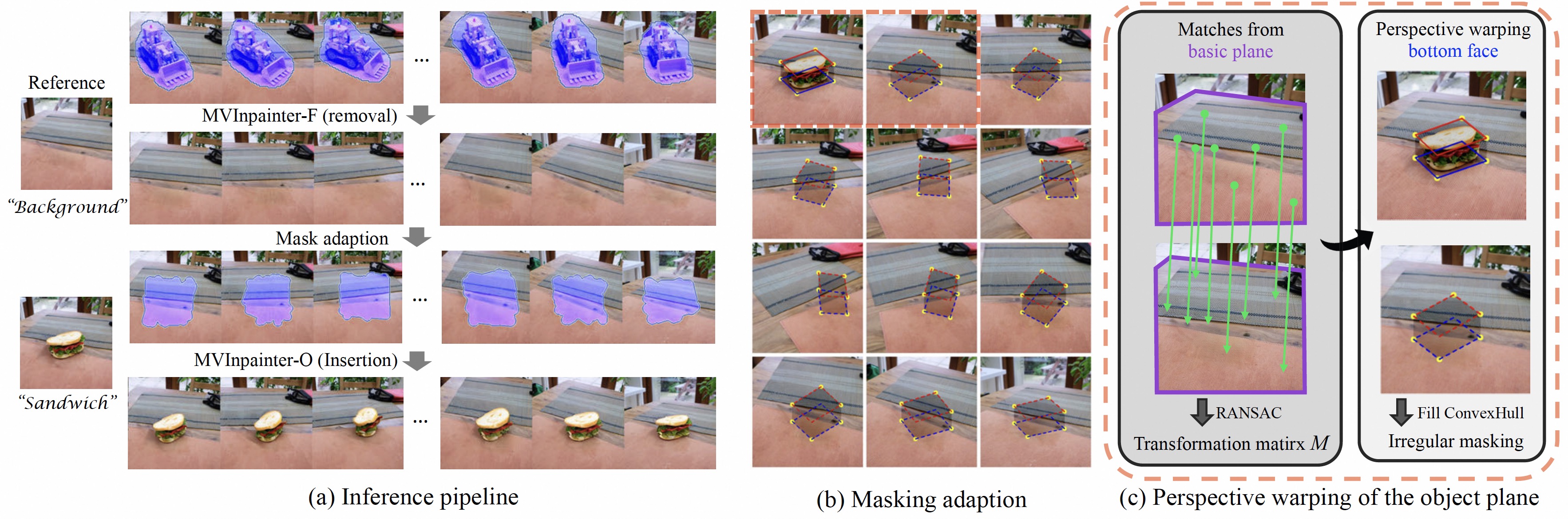

To confirm the mask shape for inference, we propose the masking adaption, starting with a simple 4-point bottom face of the object. We apply the perspective warping through the dense matching of the basic plane to warp mask with correct shapes.

@article{cao2024mvinpainter,

title={MVInpainter: Learning Multi-View Consistent Inpainting to Bridge 2D and 3D Editing},

author={Cao, Chenjie and Yu, Chaohui and Fu, Yanwei and Wang, Fan and Xue, Xiangyang},

journal={arXiv preprint arXiv:2408.08000},

year={2024}

}